Compare commits

1319 Commits


.github/workflows/ci.yml (vendored; 74 changed lines)

@@ -13,20 +13,24 @@ on:
   schedule:
     - cron: '0 5 * * 4'

+concurrency:
+  group: ${{ github.workflow }}-${{ github.ref }}
+  cancel-in-progress: true
+
 jobs:
   build_linux:

     runs-on: ${{ matrix.os }}
     strategy:
       matrix:
-        os: [ ubuntu-18.04, ubuntu-20.04 ]
+        os: [ ubuntu-18.04, ubuntu-20.04, ubuntu-22.04 ]
         python-version: ["3.8", "3.9", "3.10"]

     steps:
     - uses: actions/checkout@v3

     - name: Set up Python
-      uses: actions/setup-python@v3
+      uses: actions/setup-python@v4
       with:
         python-version: ${{ matrix.python-version }}

@@ -62,15 +66,15 @@ jobs:
     - name: Tests
       run: |
         pytest --random-order --cov=freqtrade --cov-config=.coveragerc
-      if: matrix.python-version != '3.9'
+      if: matrix.python-version != '3.9' || matrix.os != 'ubuntu-22.04'

     - name: Tests incl. ccxt compatibility tests
       run: |
         pytest --random-order --cov=freqtrade --cov-config=.coveragerc --longrun
-      if: matrix.python-version == '3.9'
+      if: matrix.python-version == '3.9' && matrix.os == 'ubuntu-22.04'

     - name: Coveralls
-      if: (runner.os == 'Linux' && matrix.python-version == '3.8')
+      if: (runner.os == 'Linux' && matrix.python-version == '3.9')
       env:
         # Coveralls token. Not used as secret due to github not providing secrets to forked repositories
         COVERALLS_REPO_TOKEN: 6D1m0xupS3FgutfuGao8keFf9Hc0FpIXu

@@ -78,11 +82,13 @@ jobs:
         # Allow failure for coveralls
         coveralls || true

-    - name: Backtesting
+    - name: Backtesting (multi)
       run: |
         cp config_examples/config_bittrex.example.json config.json
         freqtrade create-userdir --userdir user_data
-        freqtrade backtesting --datadir tests/testdata --strategy SampleStrategy
+        freqtrade new-strategy -s AwesomeStrategy
+        freqtrade new-strategy -s AwesomeStrategyMin --template minimal
+        freqtrade backtesting --datadir tests/testdata --strategy-list AwesomeStrategy AwesomeStrategyMin -i 5m

     - name: Hyperopt
       run: |

@@ -121,7 +127,7 @@ jobs:
     - uses: actions/checkout@v3

     - name: Set up Python
-      uses: actions/setup-python@v3
+      uses: actions/setup-python@v4
       with:
         python-version: ${{ matrix.python-version }}

@@ -157,29 +163,15 @@ jobs:
         pip install -e .

     - name: Tests
-      if: (runner.os != 'Linux' || matrix.python-version != '3.8')
       run: |
         pytest --random-order

-    - name: Tests (with cov)
-      if: (runner.os == 'Linux' && matrix.python-version == '3.8')
-      run: |
-        pytest --random-order --cov=freqtrade --cov-config=.coveragerc
-
-    - name: Coveralls
-      if: (runner.os == 'Linux' && matrix.python-version == '3.8')
-      env:
-        # Coveralls token. Not used as secret due to github not providing secrets to forked repositories
-        COVERALLS_REPO_TOKEN: 6D1m0xupS3FgutfuGao8keFf9Hc0FpIXu
-      run: |
-        # Allow failure for coveralls
-        coveralls -v || true
-
     - name: Backtesting
       run: |
         cp config_examples/config_bittrex.example.json config.json
         freqtrade create-userdir --userdir user_data
-        freqtrade backtesting --datadir tests/testdata --strategy SampleStrategy
+        freqtrade new-strategy -s AwesomeStrategyAdv --template advanced
+        freqtrade backtesting --datadir tests/testdata --strategy AwesomeStrategyAdv

     - name: Hyperopt
       run: |

@@ -219,7 +211,7 @@ jobs:
     - uses: actions/checkout@v3

     - name: Set up Python
-      uses: actions/setup-python@v3
+      uses: actions/setup-python@v4
       with:
         python-version: ${{ matrix.python-version }}

@@ -271,9 +263,9 @@ jobs:
     - uses: actions/checkout@v3

     - name: Set up Python
-      uses: actions/setup-python@v3
+      uses: actions/setup-python@v4
       with:
-        python-version: 3.9
+        python-version: "3.10"

     - name: pre-commit dependencies
       run: |

@@ -290,9 +282,9 @@ jobs:
         ./tests/test_docs.sh

     - name: Set up Python
-      uses: actions/setup-python@v3
+      uses: actions/setup-python@v4
       with:
-        python-version: 3.9
+        python-version: "3.10"

     - name: Documentation build
       run: |

@@ -308,18 +300,6 @@ jobs:
         details: Freqtrade doc test failed!
         webhookUrl: ${{ secrets.DISCORD_WEBHOOK }}

-  cleanup-prior-runs:
-    permissions:
-      actions: write # for rokroskar/workflow-run-cleanup-action to obtain workflow name & cancel it
-      contents: read # for rokroskar/workflow-run-cleanup-action to obtain branch
-    runs-on: ubuntu-20.04
-    steps:
-    - name: Cleanup previous runs on this branch
-      uses: rokroskar/workflow-run-cleanup-action@v0.3.3
-      if: "!startsWith(github.ref, 'refs/tags/') && github.ref != 'refs/heads/stable' && github.repository == 'freqtrade/freqtrade'"
-      env:
-        GITHUB_TOKEN: "${{ secrets.GITHUB_TOKEN }}"
-
   # Notify only once - when CI completes (and after deploy) in case it's successfull
   notify-complete:
     needs: [ build_linux, build_macos, build_windows, docs_check, mypy_version_check ]

@@ -327,7 +307,7 @@ jobs:
     # Discord notification can't handle schedule events
     if: (github.event_name != 'schedule')
     permissions:
-      repository-projects: read
+      repository-projects: read
     steps:

     - name: Check user permission

@@ -356,9 +336,9 @@ jobs:
     - uses: actions/checkout@v3

     - name: Set up Python
-      uses: actions/setup-python@v3
+      uses: actions/setup-python@v4
       with:
-        python-version: 3.8
+        python-version: "3.9"

     - name: Extract branch name
       shell: bash

@@ -371,7 +351,7 @@ jobs:
         python setup.py sdist bdist_wheel

     - name: Publish to PyPI (Test)
-      uses: pypa/gh-action-pypi-publish@master
+      uses: pypa/gh-action-pypi-publish@v1.5.1
       if: (github.event_name == 'release')
       with:
         user: __token__

@@ -379,7 +359,7 @@ jobs:
         repository_url: https://test.pypi.org/legacy/

     - name: Publish to PyPI
-      uses: pypa/gh-action-pypi-publish@master
+      uses: pypa/gh-action-pypi-publish@v1.5.1
       if: (github.event_name == 'release')
       with:
         user: __token__

@@ -419,7 +399,7 @@ jobs:

     - name: Discord notification
       uses: rjstone/discord-webhook-notify@v1
-      if: always() && ( github.event_name != 'pull_request' || github.event.pull_request.head.repo.fork == false)
+      if: always() && ( github.event_name != 'pull_request' || github.event.pull_request.head.repo.fork == false) && (github.event_name != 'schedule')
       with:
         severity: info
         details: Deploy Succeeded!
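The two rewritten `if:` expressions on the Linux test steps are logical complements of each other (De Morgan's law), so each matrix entry should run exactly one of the two steps, and only the Python 3.9 / ubuntu-22.04 entry should run the `--longrun` variant. A minimal Python sketch of that check, assuming the matrix values shown in the first hunk; the function names are illustrative and not part of the workflow:

```python
from itertools import product
from typing import List, Tuple

# Matrix values copied from the updated workflow.
OS_LIST = ["ubuntu-18.04", "ubuntu-20.04", "ubuntu-22.04"]
PYTHON_VERSIONS = ["3.8", "3.9", "3.10"]


def runs_plain_tests(os_name: str, python_version: str) -> bool:
    """Mirror of: matrix.python-version != '3.9' || matrix.os != 'ubuntu-22.04'."""
    return python_version != "3.9" or os_name != "ubuntu-22.04"


def runs_longrun_tests(os_name: str, python_version: str) -> bool:
    """Mirror of: matrix.python-version == '3.9' && matrix.os == 'ubuntu-22.04'."""
    return python_version == "3.9" and os_name == "ubuntu-22.04"


def longrun_entries() -> List[Tuple[str, str]]:
    """Return the (os, python) matrix entries that run the --longrun step."""
    return [
        (os_name, py)
        for os_name, py in product(OS_LIST, PYTHON_VERSIONS)
        if runs_longrun_tests(os_name, py)
    ]


if __name__ == "__main__":
    for os_name, py in product(OS_LIST, PYTHON_VERSIONS):
        # Exactly one of the two test steps is selected for each entry.
        assert runs_plain_tests(os_name, py) != runs_longrun_tests(os_name, py)
    print(longrun_entries())
```

Under these assumptions, eight of the nine matrix entries run the plain test step and one runs the long-running ccxt compatibility tests.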

8

.gitignore

vendored

@@ -7,10 +7,15 @@ logfile.txt
 user_data/*
 !user_data/strategy/sample_strategy.py
 !user_data/notebooks
+!user_data/models
+!user_data/freqaimodels
+user_data/freqaimodels/*
+user_data/models/*
 user_data/notebooks/*
 freqtrade-plot.html
 freqtrade-profit-plot.html
 freqtrade/rpc/api_server/ui/*
+build_helpers/ta-lib/*

 # Macos related
 .DS_Store

@@ -80,6 +85,8 @@ instance/

 # Sphinx documentation
 docs/_build/
+# Mkdocs documentation
+site/

 # PyBuilder
 target/

@@ -105,3 +112,4 @@ target/
 !config_examples/config_ftx.example.json
 !config_examples/config_full.example.json
 !config_examples/config_kraken.example.json
+!config_examples/config_freqai.example.json

@@ -13,11 +13,11 @@ repos:
       - id: mypy
         exclude: build_helpers
         additional_dependencies:
-          - types-cachetools==5.0.1
-          - types-filelock==3.2.5
-          - types-requests==2.27.20
-          - types-tabulate==0.8.7
-          - types-python-dateutil==2.8.12
+          - types-cachetools==5.2.1
+          - types-filelock==3.2.7
+          - types-requests==2.28.9
+          - types-tabulate==0.8.11
+          - types-python-dateutil==2.8.19
         # stages: [push]

   - repo: https://github.com/pycqa/isort

@@ -1,4 +1,4 @@
-FROM python:3.9.9-slim-bullseye as base
+FROM python:3.10.6-slim-bullseye as base

 # Setup env
 ENV LANG C.UTF-8

@@ -11,7 +11,7 @@ ENV FT_APP_ENV="docker"
 # Prepare environment
 RUN mkdir /freqtrade \
   && apt-get update \
-  && apt-get -y install sudo libatlas3-base curl sqlite3 libhdf5-serial-dev \
+  && apt-get -y install sudo libatlas3-base curl sqlite3 libhdf5-serial-dev libgomp1 \
   && apt-get clean \
   && useradd -u 1000 -G sudo -U -m -s /bin/bash ftuser \
   && chown ftuser:ftuser /freqtrade \

11

README.md

@@ -9,10 +9,6 @@ Freqtrade is a free and open source crypto trading bot written in Python. It is



-## Sponsored promotion
-
-[](https://tokenbot.com/?utm_source=github&utm_medium=freqtrade&utm_campaign=algodevs)
-
 ## Disclaimer

 This software is for educational purposes only. Do not risk money which

@@ -39,7 +35,7 @@ Please read the [exchange specific notes](docs/exchanges.md) to learn about even
 - [X] [OKX](https://okx.com/) (Former OKEX)
 - [ ] [potentially many others](https://github.com/ccxt/ccxt/). _(We cannot guarantee they will work)_

-### Experimentally, freqtrade also supports futures on the following exchanges
+### Supported Futures Exchanges (experimental)

 - [X] [Binance](https://www.binance.com/)
 - [X] [Gate.io](https://www.gate.io/ref/6266643)

@@ -67,6 +63,7 @@ Please find the complete documentation on the [freqtrade website](https://www.fr
 - [x] **Dry-run**: Run the bot without paying money.
 - [x] **Backtesting**: Run a simulation of your buy/sell strategy.
 - [x] **Strategy Optimization by machine learning**: Use machine learning to optimize your buy/sell strategy parameters with real exchange data.
+- [X] **Adaptive prediction modeling**: Build a smart strategy with FreqAI that self-trains to the market via adaptive machine learning methods. [Learn more](https://www.freqtrade.io/en/stable/freqai/)
 - [x] **Edge position sizing** Calculate your win rate, risk reward ratio, the best stoploss and adjust your position size before taking a position for each specific market. [Learn more](https://www.freqtrade.io/en/stable/edge/).
 - [x] **Whitelist crypto-currencies**: Select which crypto-currency you want to trade or use dynamic whitelists.
 - [x] **Blacklist crypto-currencies**: Select which crypto-currency you want to avoid.

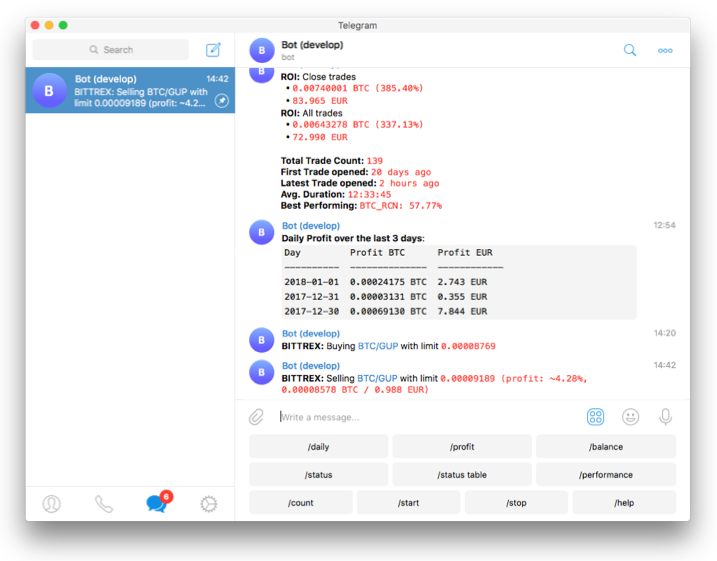

@@ -133,7 +130,7 @@ Telegram is not mandatory. However, this is a great way to control your bot. Mor

 - `/start`: Starts the trader.
 - `/stop`: Stops the trader.
-- `/stopbuy`: Stop entering new trades.
+- `/stopentry`: Stop entering new trades.
 - `/status <trade_id>|[table]`: Lists all or specific open trades.
 - `/profit [<n>]`: Lists cumulative profit from all finished trades, over the last n days.
 - `/forceexit <trade_id>|all`: Instantly exits the given trade (Ignoring `minimum_roi`).

@@ -197,7 +194,7 @@ Issues labeled [good first issue](https://github.com/freqtrade/freqtrade/labels/

 The clock must be accurate, synchronized to a NTP server very frequently to avoid problems with communication to the exchanges.

-### Min hardware required
+### Minimum hardware required

 To run this bot we recommend you a cloud instance with a minimum of:

@@ -4,7 +4,7 @@ else
     INSTALL_LOC=${1}
 fi
 echo "Installing to ${INSTALL_LOC}"
-if [ ! -f "${INSTALL_LOC}/lib/libta_lib.a" ]; then
+if [ -n "$2" ] || [ ! -f "${INSTALL_LOC}/lib/libta_lib.a" ]; then
     tar zxvf ta-lib-0.4.0-src.tar.gz
     cd ta-lib \
     && sed -i.bak "s|0.00000001|0.000000000000000001 |g" src/ta_func/ta_utility.h \

@@ -17,11 +17,17 @@ if [ ! -f "${INSTALL_LOC}/lib/libta_lib.a" ]; then
         cd .. && rm -rf ./ta-lib/
         exit 1
     fi
-    which sudo && sudo make install || make install
-    if [ -x "$(command -v apt-get)" ]; then
-        echo "Updating library path using ldconfig"
-        sudo ldconfig
+    if [ -z "$2" ]; then
+        which sudo && sudo make install || make install
+        if [ -x "$(command -v apt-get)" ]; then
+            echo "Updating library path using ldconfig"
+            sudo ldconfig
+        fi
+    else
+        # Don't install with sudo
+        make install
     fi
+
     cd .. && rm -rf ./ta-lib/
 else
     echo "TA-lib already installed, skipping installation"
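The reworked install script keys two decisions off an optional second positional argument: a non-empty `$2` forces a rebuild even when `libta_lib.a` already exists, and it switches installation to a plain `make install` without `sudo` or `ldconfig`. A rough Python model of those branches, written only to make the control flow easier to follow; `plan_install`, its parameters, and the action strings are hypothetical and the build-failure path is left out:

```python
from typing import List


def plan_install(lib_exists: bool, second_arg: str = "", has_apt_get: bool = True) -> List[str]:
    """Return the ordered actions the script would take (illustrative model only)."""
    # New outer condition: [ -n "$2" ] || [ ! -f "${INSTALL_LOC}/lib/libta_lib.a" ]
    if not (second_arg or not lib_exists):
        return ["skip: TA-lib already installed"]

    actions = ["build"]
    if not second_arg:
        # [ -z "$2" ]: previous behaviour, try sudo first, then refresh the linker cache.
        actions.append("which sudo && sudo make install || make install")
        if has_apt_get:
            actions.append("sudo ldconfig")
    else:
        # A second argument was given: install without sudo.
        actions.append("make install")
    actions.append("cleanup")
    return actions


if __name__ == "__main__":
    print(plan_install(lib_exists=True))
    print(plan_install(lib_exists=True, second_arg="nosudo"))
    print(plan_install(lib_exists=False))
```

In this model any non-empty value for the second argument has the same effect; the string `"nosudo"` in the usage lines is just a placeholder.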

@@ -6,10 +6,12 @@ export DOCKER_BUILDKIT=1
 # Replace / with _ to create a valid tag
 TAG=$(echo "${BRANCH_NAME}" | sed -e "s/\//_/g")
 TAG_PLOT=${TAG}_plot
+TAG_FREQAI=${TAG}_freqai
 TAG_PI="${TAG}_pi"

 TAG_ARM=${TAG}_arm
 TAG_PLOT_ARM=${TAG_PLOT}_arm
+TAG_FREQAI_ARM=${TAG_FREQAI}_arm
 CACHE_IMAGE=freqtradeorg/freqtrade_cache

 echo "Running for ${TAG}"

@@ -38,8 +40,10 @@ fi
 docker tag freqtrade:$TAG_ARM ${CACHE_IMAGE}:$TAG_ARM

 docker build --cache-from freqtrade:${TAG_ARM} --build-arg sourceimage=${CACHE_IMAGE} --build-arg sourcetag=${TAG_ARM} -t freqtrade:${TAG_PLOT_ARM} -f docker/Dockerfile.plot .
+docker build --cache-from freqtrade:${TAG_ARM} --build-arg sourceimage=${CACHE_IMAGE} --build-arg sourcetag=${TAG_ARM} -t freqtrade:${TAG_FREQAI_ARM} -f docker/Dockerfile.freqai .

 docker tag freqtrade:$TAG_PLOT_ARM ${CACHE_IMAGE}:$TAG_PLOT_ARM
+docker tag freqtrade:$TAG_FREQAI_ARM ${CACHE_IMAGE}:$TAG_FREQAI_ARM

 # Run backtest
 docker run --rm -v $(pwd)/config_examples/config_bittrex.example.json:/freqtrade/config.json:ro -v $(pwd)/tests:/tests freqtrade:${TAG_ARM} backtesting --datadir /tests/testdata --strategy-path /tests/strategy/strats/ --strategy StrategyTestV3

@@ -53,6 +57,7 @@ docker images

 # docker push ${IMAGE_NAME}
 docker push ${CACHE_IMAGE}:$TAG_PLOT_ARM
+docker push ${CACHE_IMAGE}:$TAG_FREQAI_ARM
 docker push ${CACHE_IMAGE}:$TAG_ARM

 # Create multi-arch image

@@ -66,6 +71,9 @@ docker manifest push -p ${IMAGE_NAME}:${TAG}
 docker manifest create ${IMAGE_NAME}:${TAG_PLOT} ${CACHE_IMAGE}:${TAG_PLOT_ARM} ${CACHE_IMAGE}:${TAG_PLOT}
 docker manifest push -p ${IMAGE_NAME}:${TAG_PLOT}

+docker manifest create ${IMAGE_NAME}:${TAG_FREQAI} ${CACHE_IMAGE}:${TAG_FREQAI_ARM} ${CACHE_IMAGE}:${TAG_FREQAI}
+docker manifest push -p ${IMAGE_NAME}:${TAG_FREQAI}
+
 # Tag as latest for develop builds
 if [ "${TAG}" = "develop" ]; then
     docker manifest create ${IMAGE_NAME}:latest ${CACHE_IMAGE}:${TAG_ARM} ${IMAGE_NAME}:${TAG_PI} ${CACHE_IMAGE}:${TAG}
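The publishing script derives every image tag from the branch name by replacing `/` with `_` and appending one suffix per image flavour; this change adds a `_freqai` suffix next to the existing `_plot` one. A small Python sketch of that naming scheme, based only on the variable assignments visible in the hunks above; `derive_tags` is a hypothetical helper and not part of the repository:

```python
from typing import Dict


def derive_tags(branch_name: str) -> Dict[str, str]:
    """Compute the tag names assigned at the top of the ARM publishing script (sketch)."""
    # Equivalent of the sed call that replaces every "/" with "_".
    tag = branch_name.replace("/", "_")
    tag_plot = f"{tag}_plot"
    tag_freqai = f"{tag}_freqai"
    return {
        "TAG": tag,
        "TAG_PLOT": tag_plot,
        "TAG_FREQAI": tag_freqai,
        "TAG_PI": f"{tag}_pi",
        "TAG_ARM": f"{tag}_arm",
        "TAG_PLOT_ARM": f"{tag_plot}_arm",
        "TAG_FREQAI_ARM": f"{tag_freqai}_arm",
    }


if __name__ == "__main__":
    for name, value in derive_tags("feat/freqai").items():
        print(f"{name}={value}")
```

The multi-arch manifest for the FreqAI image then pairs `TAG_FREQAI_ARM` with `TAG_FREQAI`, in the same way the plot image pairs `TAG_PLOT_ARM` with `TAG_PLOT`.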

@@ -5,6 +5,7 @@
 # Replace / with _ to create a valid tag
 TAG=$(echo "${BRANCH_NAME}" | sed -e "s/\//_/g")
 TAG_PLOT=${TAG}_plot
+TAG_FREQAI=${TAG}_freqai
 TAG_PI="${TAG}_pi"

 PI_PLATFORM="linux/arm/v7"

@@ -49,8 +50,10 @@ fi
 docker tag freqtrade:$TAG ${CACHE_IMAGE}:$TAG

 docker build --cache-from freqtrade:${TAG} --build-arg sourceimage=${CACHE_IMAGE} --build-arg sourcetag=${TAG} -t freqtrade:${TAG_PLOT} -f docker/Dockerfile.plot .
+docker build --cache-from freqtrade:${TAG} --build-arg sourceimage=${CACHE_IMAGE} --build-arg sourcetag=${TAG} -t freqtrade:${TAG_FREQAI} -f docker/Dockerfile.freqai .

 docker tag freqtrade:$TAG_PLOT ${CACHE_IMAGE}:$TAG_PLOT
+docker tag freqtrade:$TAG_FREQAI ${CACHE_IMAGE}:$TAG_FREQAI

 # Run backtest
 docker run --rm -v $(pwd)/config_examples/config_bittrex.example.json:/freqtrade/config.json:ro -v $(pwd)/tests:/tests freqtrade:${TAG} backtesting --datadir /tests/testdata --strategy-path /tests/strategy/strats/ --strategy StrategyTestV3

@@ -64,6 +67,7 @@ docker images

 docker push ${CACHE_IMAGE}
 docker push ${CACHE_IMAGE}:$TAG_PLOT
+docker push ${CACHE_IMAGE}:$TAG_FREQAI
 docker push ${CACHE_IMAGE}:$TAG

96

config_examples/config_freqai.example.json

Normal file

@@ -0,0 +1,96 @@
+{
+    "trading_mode": "futures",
+    "margin_mode": "isolated",
+    "max_open_trades": 5,
+    "stake_currency": "USDT",
+    "stake_amount": 200,
+    "tradable_balance_ratio": 1,
+    "fiat_display_currency": "USD",
+    "dry_run": true,
+    "timeframe": "3m",
+    "dry_run_wallet": 1000,
+    "cancel_open_orders_on_exit": true,
+    "unfilledtimeout": {
+        "entry": 10,
+        "exit": 30
+    },
+    "exchange": {
+        "name": "binance",
+        "key": "",
+        "secret": "",
+        "ccxt_config": {
+            "enableRateLimit": true
+        },
+        "ccxt_async_config": {
+            "enableRateLimit": true,
+            "rateLimit": 200
+        },
+        "pair_whitelist": [
+            "1INCH/USDT",
+            "ALGO/USDT"
+        ],
+        "pair_blacklist": []
+    },
+    "entry_pricing": {
+        "price_side": "same",
+        "use_order_book": true,
+        "order_book_top": 1,